Implementing Ontology-Based Deductive Databases for Real-Time Insights

From Architecture to Deployment

Deploying an ontology-based deductive database is a structured engineering process, not a research exercise. The technology has matured to the point where implementation follows repeatable patterns, and the failure modes are well understood. Most failed implementations share a common characteristic: they begin with an ontology scope that is too broad, attempting to model an entire enterprise domain before any operational value has been demonstrated.

A sound implementation starts narrow and expands deliberately. The first deployment should address a specific, high-value problem where the cost of semantic debt is visible and measurable: a regulatory reporting process with persistent reconciliation issues, a product knowledge system where inconsistent classification drives downstream errors, or a risk model that requires cross-domain inference that SQL cannot express without prohibitive complexity.

The goal of the first deployment is not to build a comprehensive enterprise ontology. It is to demonstrate operational value, develop team competency, and establish the integration patterns that will scale to broader domains.

Ontology Development: Engineering Discipline, Not Academic Taxonomy

Enterprise ontology development is often approached as a taxonomy exercise. Business stakeholders are interviewed, terms are collected, and a hierarchy is assembled that reflects how people think about the domain. This approach produces documentation, not an operational knowledge base.

An ontology suitable for a deductive database must meet different requirements. Concepts must be defined with sufficient formal precision that the inference engine can apply rules consistently. Relationships must be declared with their logical properties (whether they are transitive, symmetric, or functional) so that deductive reasoning produces correct results. Axioms must be grounded in business rules that the organization actually enforces, not idealized classifications that reflect how practitioners wish the domain worked.
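To make the role of declared logical properties concrete, the following is a minimal sketch in Python, not the syntax of any particular ontology language. The entity names, the property names (`subsidiary_of`, `partner_of`, `has_primary_regulator`), and the decision to treat a second value of a functional property as a violation are all illustrative assumptions. An OWL reasoner, which does not assume unique names, would instead infer that the two values denote the same individual.

```python
from collections import defaultdict

# Hypothetical facts as (subject, property, object) triples.
FACTS = {
    ("acme_ltd", "subsidiary_of", "acme_holdings"),
    ("acme_holdings", "subsidiary_of", "acme_group"),
    ("acme_ltd", "partner_of", "globex"),
    ("acme_ltd", "has_primary_regulator", "fca"),
}

# Declared logical properties of each relationship (illustrative names).
TRANSITIVE = {"subsidiary_of"}
SYMMETRIC = {"partner_of"}
FUNCTIONAL = {"has_primary_regulator"}


def close(facts):
    """Apply the symmetric and transitive declarations until nothing new is derived."""
    derived = set(facts)
    while True:
        new = set()
        for s, p, o in derived:
            if p in SYMMETRIC:
                new.add((o, p, s))
            if p in TRANSITIVE:
                for s2, p2, o2 in derived:
                    if p2 == p and s2 == o:
                        new.add((s, p, o2))
        if new <= derived:  # fixpoint reached
            return derived
        derived |= new


def functional_violations(facts):
    """Return {(subject, property): values} where a functional property has several values.

    Assumes distinct names denote distinct things (a closed-world style check).
    """
    values = defaultdict(set)
    for s, p, o in facts:
        if p in FUNCTIONAL:
            values[(s, p)].add(o)
    return {key: vals for key, vals in values.items() if len(vals) > 1}
```

Without the `TRANSITIVE` declaration, the fact that `acme_ltd` ultimately belongs to `acme_group` would never be derived; with a wrong declaration, it would be derived for relationships where it does not hold.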

This requires collaboration between domain experts who understand the business rules and knowledge engineers who understand the formal requirements of the target system. The collaboration is iterative. Initial ontology drafts will contain ambiguities that only surface when test queries produce unexpected results. Resolving those ambiguities (sharpening definitions, clarifying relationship semantics, adjusting rule conditions) is the core of ontology engineering work.

The output of this process is not a diagram. It is a formal specification that the database can execute.

Deductive Inference: Architectural Implications

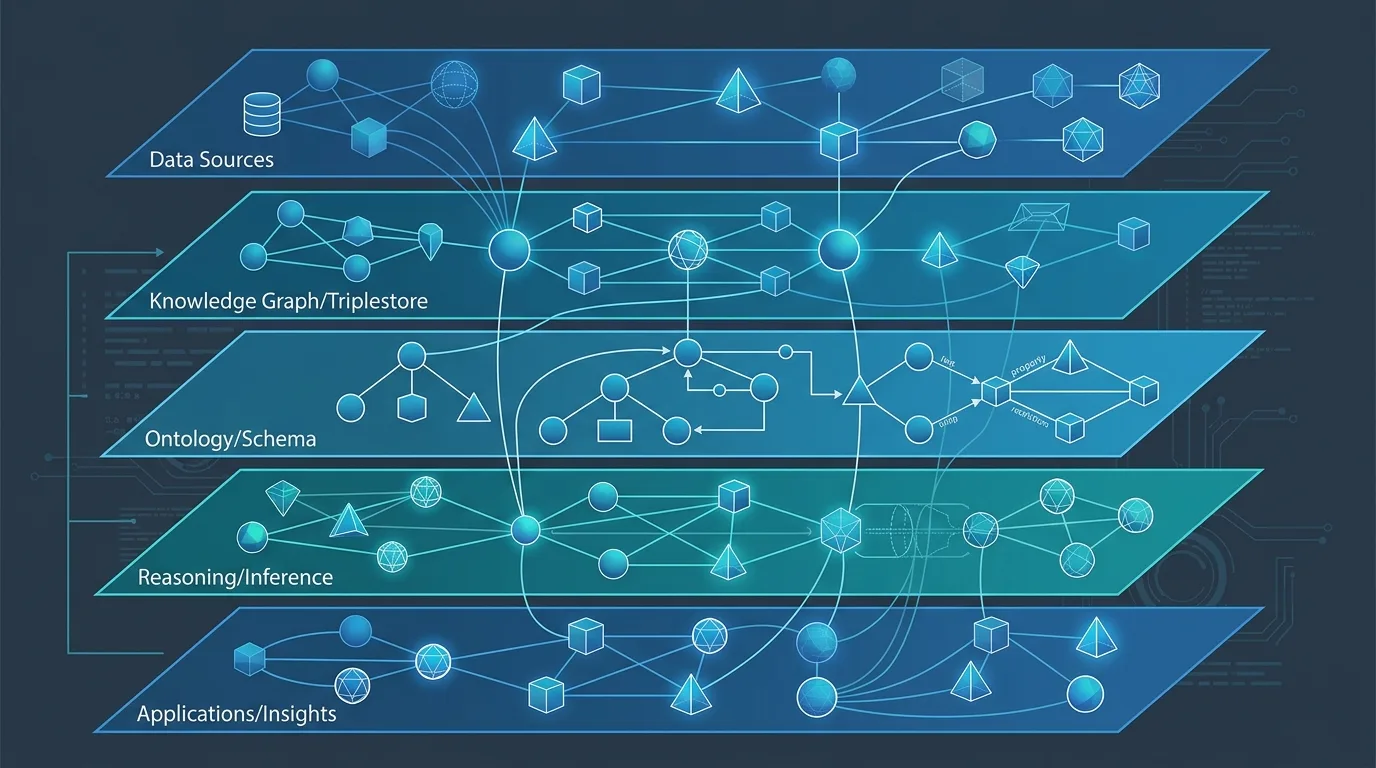

Deductive inference introduces architectural considerations that are absent from conventional database deployments. The inference engine must compute derived facts either on demand at query time or ahead of time through pre-computation (materialization), and the choice between these approaches has significant performance implications.

Forward chaining materializes inferred facts at ingestion time: when new data enters the system, the inference engine immediately computes all derivable facts and stores them. This approach minimizes query latency because queries retrieve pre-computed results. The tradeoff is storage overhead and ingestion latency, both of which increase with ontology complexity.
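As an illustration of forward chaining, the sketch below runs a naive fixpoint loop at ingestion time. The predicates (`owns`, `sanctioned`, `exposed`), the two rules, and the `MaterializedStore` class are hypothetical and chosen only for the example. Production engines typically use incremental evaluation rather than re-running every rule over every fact, and retraction of facts is not handled here.

```python
def rule_transitive_ownership(facts):
    """owns(a, b) and owns(b, c)  =>  owns(a, c)"""
    owns = [(f[1], f[2]) for f in facts if f[0] == "owns"]
    return {("owns", a, c) for a, b in owns for b2, c in owns if b == b2}


def rule_sanctions_exposure(facts):
    """owns(a, b) and sanctioned(b)  =>  exposed(a)"""
    sanctioned = {f[1] for f in facts if f[0] == "sanctioned"}
    return {("exposed", f[1]) for f in facts if f[0] == "owns" and f[2] in sanctioned}


RULES = [rule_transitive_ownership, rule_sanctions_exposure]


class MaterializedStore:
    """Stores base facts and every fact derivable from them."""

    def __init__(self, rules):
        self.rules = rules
        self.facts = set()

    def ingest(self, new_facts):
        """Add base facts, then apply all rules until no new fact appears.

        Returns the number of derived facts that were stored.
        """
        self.facts |= set(new_facts)
        derived_count = 0
        changed = True
        while changed:
            changed = False
            for rule in self.rules:
                fresh = rule(self.facts) - self.facts
                if fresh:
                    self.facts |= fresh
                    derived_count += len(fresh)
                    changed = True
        return derived_count

    def query(self, predicate):
        """Queries are plain lookups: all inference already happened at ingestion."""
        return {f for f in self.facts if f[0] == predicate}
```

The cost profile described above is visible in the structure: `ingest` does all the work and grows the stored fact set, while `query` is a simple filter.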

Backward chaining defers inference to query time: when a query arrives, the inference engine works backward from the query goal to determine which base facts and rules are needed, then evaluates them on demand. This approach reduces storage requirements and ingestion overhead. Query latency is higher and less predictable, particularly for queries that trigger deep inference chains.
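A goal-directed counterpart can be sketched as follows, again with hypothetical predicates (`owns`, `sanctioned`) and hand-written proof functions standing in for a general rule interpreter. Nothing derived is stored; each query walks only the facts it needs.

```python
# Only base facts are stored; nothing is derived ahead of time.
BASE = {("owns", "a", "b"), ("owns", "b", "c"), ("sanctioned", "c")}


def prove_owns(a, c, facts, visited=frozenset()):
    """Prove owns(a, c): either stated directly, or via an intermediate holding.

    `visited` guards against cycles in the ownership graph.
    """
    if ("owns", a, c) in facts:
        return True
    visited = visited | {a}
    for fact in facts:
        if fact[0] == "owns" and fact[1] == a and fact[2] not in visited:
            if prove_owns(fact[2], c, facts, visited):
                return True
    return False


def prove_exposed(a, facts):
    """Prove exposed(a): a owns, directly or indirectly, some sanctioned entity."""
    sanctioned = [f[1] for f in facts if f[0] == "sanctioned"]
    return any(prove_owns(a, target, facts) for target in sanctioned)
```

The recursion depth in `prove_owns` depends on how long the ownership chain happens to be for a given entity, which is one source of the less predictable query latency noted above.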

Hybrid approaches (materializing frequently queried inference results while deferring others) are common in production deployments. The right balance depends on query patterns, data volatility, and latency requirements. These parameters should be established before implementation begins, not discovered during performance testing.
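One way to picture a hybrid arrangement is a store that pre-computes a configured set of predicates and evaluates the rest on demand. The sketch below assumes a hypothetical `HybridStore` class and two illustrative derivations with made-up thresholds; it recomputes the materialized predicates in full on every ingestion, which a real system would replace with incremental maintenance.

```python
def high_value(base):
    """Hypothetical rule: accounts with a balance of at least 1,000,000."""
    return {("high_value", f[1]) for f in base if f[0] == "balance" and f[2] >= 1_000_000}


def dormant(base):
    """Hypothetical rule: accounts with no activity for more than 365 days."""
    return {("dormant", f[1]) for f in base if f[0] == "last_activity_days" and f[2] > 365}


class HybridStore:
    def __init__(self, base_facts, derivations, materialize):
        # derivations: predicate name -> function(base facts) -> set of derived facts
        self.base = set(base_facts)
        self.derivations = derivations
        self.materialize = set(materialize)
        self.cache = {}
        self.on_demand_evaluations = 0
        self._refresh()

    def _refresh(self):
        # Naive full recomputation of the materialized predicates.
        self.cache = {p: self.derivations[p](self.base) for p in self.materialize}

    def ingest(self, facts):
        """Ingestion pays only for the predicates chosen for materialization."""
        self.base |= set(facts)
        self._refresh()

    def query(self, predicate):
        if predicate in self.cache:
            return self.cache[predicate]  # pre-computed: cheap lookup
        self.on_demand_evaluations += 1
        return self.derivations[predicate](self.base)  # computed at query time
```

Which predicates go into `materialize` is exactly the decision that query patterns, data volatility, and latency requirements should drive.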

Integration with Existing Data Systems

An ontology-based deductive database does not operate in isolation. Enterprise deployments require integration with source systems, ETL processes, and downstream consumers. Each integration point presents semantic challenges.

Source system data arrives with implicit schema assumptions that must be mapped to ontology concepts. A customer record in a CRM system may include fields that correspond to multiple distinct concepts in the ontology: contact, account, legal entity, billing relationship. The mapping layer must make these correspondences explicit and handle the cases where source data is incomplete, inconsistent, or ambiguous.
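A mapping layer of this kind might look like the sketch below. The CRM field names (`customer_id`, `contact_email`, `legal_name`, `billing_account_id`), the identifier scheme, and the property names are assumptions made for illustration; field values are assumed to be strings or missing. The point is that each correspondence is explicit and that incomplete input is reported rather than silently dropped.

```python
def map_crm_record(record):
    """Map one CRM customer record to ontology triples.

    Returns (triples, issues); issues lists what could not be asserted.
    """
    triples, issues = [], []
    customer_id = (record.get("customer_id") or "").strip()
    if not customer_id:
        return triples, ["missing customer_id: record skipped"]

    account = f"account/{customer_id}"
    triples.append((account, "rdf:type", "Account"))

    email = (record.get("contact_email") or "").strip().lower()
    if email:
        contact = f"contact/{email}"
        triples.append((contact, "rdf:type", "Contact"))
        triples.append((account, "hasContact", contact))
    else:
        issues.append("no contact_email: Contact not asserted")

    legal_name = (record.get("legal_name") or "").strip()
    if legal_name:
        entity = f"legal-entity/{customer_id}"
        triples.append((entity, "rdf:type", "LegalEntity"))
        triples.append((entity, "legalName", legal_name))
        triples.append((account, "heldBy", entity))
    else:
        issues.append("no legal_name: LegalEntity not asserted")

    billing_id = (record.get("billing_account_id") or "").strip()
    if billing_id:
        billing = f"billing/{billing_id}"
        triples.append((billing, "rdf:type", "BillingRelationship"))
        triples.append((account, "billedThrough", billing))
    else:
        issues.append("no billing_account_id: BillingRelationship not asserted")

    return triples, issues
```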

ETL processes for semantic systems differ from conventional data pipeline patterns. In addition to moving data, they must assert or validate ontological relationships. A pipeline that loads contract data must not only populate contract records but must assert the relationships between contracts and the parties, products, and regulatory categories they involve. Errors in relationship assertion are semantic errors that will produce incorrect inference results, and they require validation logic that conventional data quality tools are not designed to detect.
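The kind of validation this calls for can be sketched as a check that every loaded contract carries the relationships the ontology expects and that each relationship points at an individual of the right class. The property names, class names, and the simplification that each individual has a single asserted type are assumptions for the example.

```python
# Hypothetical requirement: each contract must link to at least one individual
# of the given class through the given property.
REQUIRED_LINKS = {
    "hasParty": "Party",
    "coversProduct": "Product",
    "inRegulatoryCategory": "RegulatoryCategory",
}


def validate_contract(contract_id, triples):
    """Return a list of semantic errors for one contract (empty if none)."""
    # Simplification: one asserted type per individual.
    types = {s: o for s, p, o in triples if p == "rdf:type"}
    errors = []
    if types.get(contract_id) != "Contract":
        errors.append(f"{contract_id}: not typed as Contract")
    for prop, expected_type in REQUIRED_LINKS.items():
        targets = [o for s, p, o in triples if s == contract_id and p == prop]
        if not targets:
            errors.append(f"{contract_id}: no {prop} assertion")
        for target in targets:
            if types.get(target) != expected_type:
                errors.append(f"{contract_id}: {prop} -> {target} is not typed as {expected_type}")
    return errors
```

A row-level data quality check would pass a contract whose party link points at a product; this check would not, because it tests the asserted relationship against the ontology rather than the shape of the record.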

Downstream consumers (analytics platforms, reporting systems, application APIs) query the semantic system through interfaces appropriate to their architecture. SPARQL is the standard query language for RDF-based semantic databases; OWL-based systems may expose query interfaces based on description logic. Applications that cannot use these natively can be served through translation layers, though translation introduces complexity and should be designed deliberately.
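A deliberately small translation layer might accept a class name and a set of exact-match filters from an application and emit a SPARQL query. The sketch below is one possible shape, not a standard API: the function name `build_select`, the `ex:` prefix, and the restriction to string-valued equality filters are assumptions. Identifiers are validated and literals escaped so that application input cannot alter the query structure; the namespace IRI is treated as trusted configuration.

```python
import re

# Conservative pattern for names used after the "ex:" prefix.
_LOCAL_NAME = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def _check_name(name):
    if not _LOCAL_NAME.fullmatch(name):
        raise ValueError(f"unsafe identifier: {name!r}")
    return name


def _escape_literal(value):
    """Escape a string for use inside a double-quoted SPARQL literal."""
    return (
        value.replace("\\", "\\\\")
        .replace('"', '\\"')
        .replace("\n", "\\n")
        .replace("\r", "\\r")
    )


def build_select(namespace_iri, class_name, filters, limit=100):
    """Build a SELECT query for instances of a class matching string filters."""
    lines = [
        f"PREFIX ex: <{namespace_iri}>",
        "SELECT ?item WHERE {",
        f"  ?item a ex:{_check_name(class_name)} .",
    ]
    for prop in sorted(filters):
        literal = _escape_literal(filters[prop])
        lines.append(f'  ?item ex:{_check_name(prop)} "{literal}" .')
    lines.append("}")
    lines.append(f"LIMIT {int(limit)}")
    return "\n".join(lines)
```

Keeping the supported query shapes this narrow is a design choice: every shape the layer accepts is one more mapping between application semantics and ontology semantics that has to be maintained.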

Operational Monitoring and Ontology Maintenance

A deductive database in production requires monitoring capabilities that go beyond conventional database metrics. In addition to query performance, ingestion throughput, and storage utilization, operations teams need visibility into inference health: are rules firing as expected, are derived fact counts consistent with data volumes, are any inference chains producing anomalous results?
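One simple form of inference health check compares, per rule, the ratio of derived facts to base facts against a baseline recorded when the system was known to behave correctly. The function below is a sketch under that assumption; the rule names, the baseline ratios, and the 50 percent tolerance are illustrative, and how per-rule counts are obtained depends on the engine in use.

```python
def inference_health(base_count, derived_by_rule, baseline_ratio, tolerance=0.5):
    """Flag rules that stopped firing or whose output volume drifted.

    base_count:       number of base facts currently stored
    derived_by_rule:  rule name -> number of facts the rule derived
    baseline_ratio:   rule name -> expected derived/base ratio
    tolerance:        allowed relative deviation from the baseline
    """
    alerts = []
    for rule, expected in baseline_ratio.items():
        derived = derived_by_rule.get(rule, 0)
        if derived == 0 and expected > 0:
            alerts.append((rule, "rule did not fire"))
            continue
        ratio = derived / base_count if base_count else 0.0
        if expected > 0 and abs(ratio - expected) / expected > tolerance:
            alerts.append((rule, f"ratio {ratio:.2f} vs baseline {expected:.2f}"))
    return alerts
```

A rule that suddenly derives three times its usual share of facts is often a sign of a bad relationship assertion upstream rather than a genuine change in the business.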

Ontology maintenance is an ongoing operational responsibility, not a one-time deployment activity. Business domains change. Rules that correctly reflected business logic at deployment time will eventually require revision. Adding a new concept or relationship to the ontology requires evaluating whether existing rules remain correct in the expanded domain. Removing or redefining a concept requires identifying all rules and queries that depend on it.
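Identifying what depends on a concept is a graph traversal once rules and queries declare which concepts they read and write. The sketch below assumes such declarations are available as plain dictionaries; the rule names, query names, and concept names are hypothetical.

```python
# Hypothetical metadata: which concepts each rule reads and derives,
# and which concepts each saved query uses.
RULES = {
    "indirect_ownership": {"reads": {"owns"}, "writes": {"controls"}},
    "sanctions_exposure": {"reads": {"controls", "SanctionedEntity"}, "writes": {"ExposedEntity"}},
    "vip_customer": {"reads": {"Account"}, "writes": {"VipCustomer"}},
}
QUERIES = {
    "exposure_report": {"ExposedEntity"},
    "vip_list": {"VipCustomer"},
}


def impacted_by(concept, rules, queries):
    """Return (rules, queries) that depend on `concept`, directly or transitively."""
    affected_concepts = {concept}
    affected_rules = set()
    changed = True
    while changed:
        changed = False
        for name, rule in rules.items():
            if name not in affected_rules and rule["reads"] & affected_concepts:
                affected_rules.add(name)
                # Whatever this rule derives is now affected as well.
                affected_concepts |= rule["writes"]
                changed = True
    affected_queries = {q for q, used in queries.items() if used & affected_concepts}
    return affected_rules, affected_queries
```

Here a change to `owns` reaches `exposure_report` through two rules, even though the report never mentions ownership directly.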

Version control for ontologies follows the same principles as version control for code but with additional semantic considerations. A change that appears syntactically minor (adjusting the domain of a property, refining a class definition) can alter inference results globally. Ontology change management should include automated testing against known inference outcomes, not just schema validation.
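The effect of a small domain change can be demonstrated with the RDFS domain rule, under which asserting a property on a subject implies that the subject belongs to the property's domain class. The sketch below implements only that one rule and compares its output with a stored set of expected inferences; the data, class names, and the `regression_diff` helper are illustrative assumptions.

```python
def infer_types(triples, domains):
    """RDFS-style domain rule: (s, p, o) and p has domain C  =>  s is a C."""
    return {(s, domains[p]) for s, p, o in triples if p in domains}


# Hypothetical test data and the inference results known to be correct.
DATA = [
    ("acme", "holdsLicense", "lic-1"),
    ("jane", "holdsLicense", "lic-2"),
]
GOLDEN = {("acme", "RegulatedEntity"), ("jane", "RegulatedEntity")}


def regression_diff(domains):
    """Compare inferences under a candidate ontology version with the golden set."""
    actual = infer_types(DATA, domains)
    return {"missing": GOLDEN - actual, "unexpected": actual - GOLDEN}
```

Changing the declared domain of `holdsLicense` from `RegulatedEntity` to `LegalEntity` is a one-line edit that passes any schema validation, yet `regression_diff` reports every previously inferred classification as missing.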

Measuring Implementation Success

The value of a deductive database deployment is most clearly measured through the problems it eliminates. Before deployment, document the specific failure modes driving the implementation: integration logic that requires manual maintenance when business rules change, analytic queries that cannot be expressed without custom application code, governance policies that cannot be enforced consistently across systems.

After deployment, measure improvement against those baselines. A reduction in the engineering effort required to maintain integration logic is a direct measure of the value delivered by moving that logic into the ontology. An increase in the fraction of analytic questions answerable without custom development measures the effectiveness of the inference layer. Consistent policy enforcement across systems measures the governance benefit.
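The comparisons themselves are simple arithmetic. As a sketch, with helper names that are illustrative rather than prescribed, the two quantitative measures can be computed as follows:

```python
def relative_change(before, after):
    """Relative change from a pre-deployment baseline (negative means a reduction)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def answerable_fraction(answered_without_custom_code, total_questions):
    """Share of analytic questions answered without custom development."""
    if total_questions == 0:
        raise ValueError("no questions recorded")
    return answered_without_custom_code / total_questions
```

For example, if integration maintenance took 40 engineer-hours per month before deployment and 10 afterwards (hypothetical figures), `relative_change(40, 10)` gives -0.75, a 75 percent reduction.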

These metrics make the case for expanding the semantic architecture to additional domains, which is a necessary condition for capturing the compounding benefits that broad ontology coverage enables.
